Multiple scrolls now show Greek letters

Who wants to help read them all?

TL;DR: Our generalist ink‑detection model and ~2 µm CT scans now reveal Greek letters across multiple scrolls. Early, noisy—but real signal. VC3D gets major usability upgrades. New $200K Unwrapping at Scale prize. Get involved.

Over the past few months we’ve pushed hard on a generalist ink‑detection model—a machine‑learning “ink hound,” as Youssef Nader dubbed it—trained to spot ink signals across many volumes, not just one. In parallel, we optimized our scanning protocol to capture ~2 µm pixel size volumes that better preserve the texture of features.

The bet was simple: better scans → better ink signal.

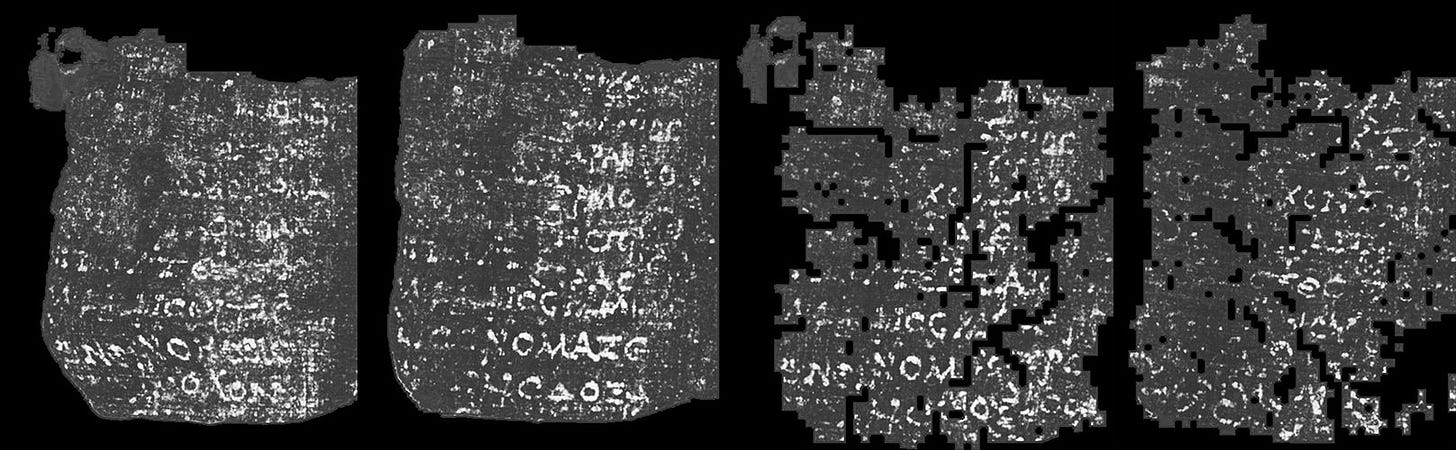

We focused on scroll fragments—detached pieces with visible writing on one face. Using IR images, we registered visible letters to their invisible, virtual‑unwrapped X‑ray equivalents.

Finally, we started training the models on a wide variety of different high resolution ink samples.

Our approach, in brief:

Train on exposed fragment surfaces (ground‑truthable ink).

Infer on hidden fragment layers; iteratively label to refine.

Generalize to closed scrolls; iterate labeling where needed.

This sort of curriculum learning assigns to models tasks with increasing difficulty. Indeed, ink on the hidden layers is believed to be harder to detect, due to being encapsulated in fibers, which are a source of blur, by both sides. Ink in unopened scrolls should be even harder to capture, due to the increased thickness of the material which the X-ray beam has to traverse.

Early results are very encouraging.

The ink‑detection models work on all tested fragments (including hidden layers in PHerc. 9B) and generalize to the ~2 µm scans of closed scrolls we acquired.

The team produced large approximate segmentations in these scans to run inference on the largest possible digitally unwrapped surface from each scroll. Because these meshes are coarse, ink detection is expected to struggle, but this allowed us to give a quick peek at the validity of the method, especially in the regions of interest on which the digital unwrapping pipeline performed well.

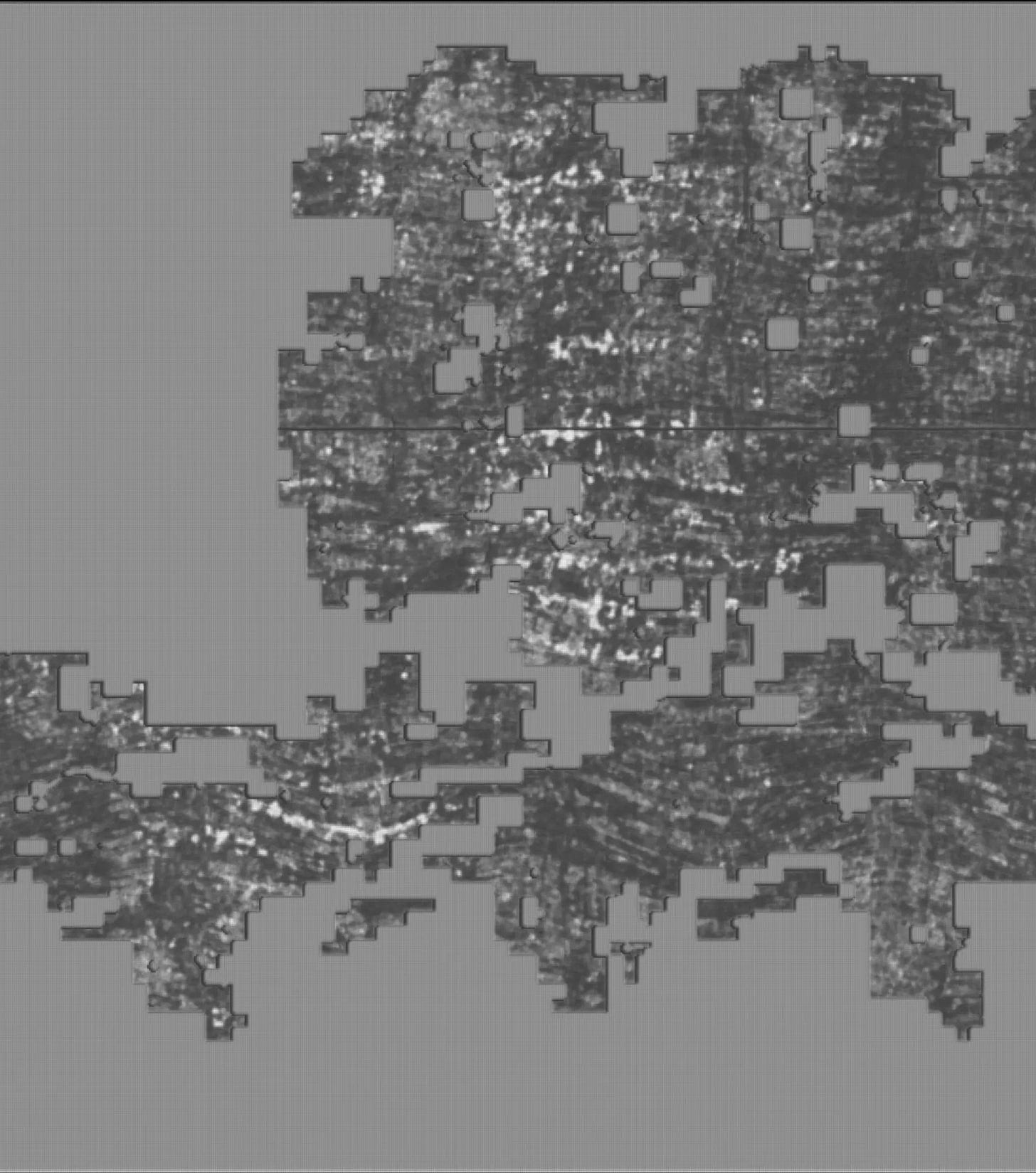

In PHerc. 814, faint letterforms appear from the predictions. The image is noisy because the mesh is inaccurate and the model is not specialized for this type of signal, but to the expert eye of the winner of the $700K 2023 Ink Detection Grand Prize these shapes are clearly emerging letters.

In PHerc. 841, despite warping in these work‑in‑progress renders, some Greek letters are visible.

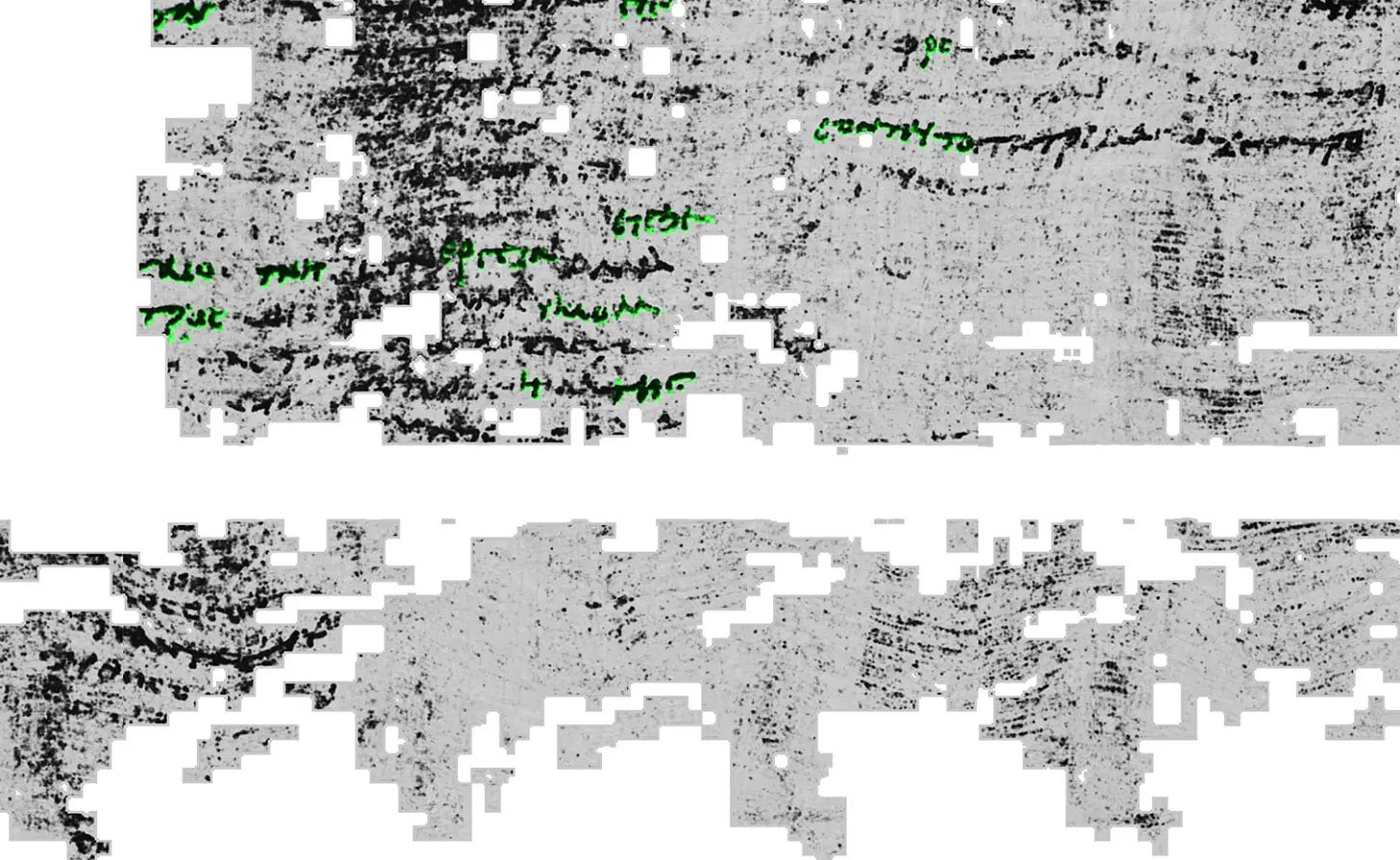

Multiple experiments have been performed on PHerc. 139, in which some regions were easier to unwrap. In this scroll, the ink signal is stronger, and some quick iterative labeling experiments (labels highlighted in green in Fig. 4) improved prediction quality.

Knowledge distillation to lower‑resolution scans

Working on higher-resolution data is more costly than on lower-resolution data, both in terms of data acquisition, storage and analysis. For this reason, we are performing experiments of knowledge distillation from models that learnt the signal on the 2 μm scans (high resolution) to the 9 μm scans (low resolution). The pixel size of the latter is comparable to those of Scroll 1 (PHerc. Paris 4) and Scroll 5 (PHerc. 172).

Fig. 5 shows the same region of interest as the lower‑left part of Fig. 4. The presence of letterforms is evident, but the signal is weaker.

A comparison of histogram-equalized views of the maximum-intensity composite for the same aligned region of interest shows that some features visible in the 2 µm scan are absent in the 9 µm scan.

For example, the letter τ (tau) is clearly visible in the 2 µm scan (lighter frame in the video), whereas only scattered ink spots are evident in the 9 µm scan (darker frame). We will investigate the causes of this phenomenon in the coming weeks.

What’s next

The reported results are all preliminary, and work is in progress. These images are comparable to the ‘first letters’ results from 2023 in Scroll 1. At the time, models could detect only a handful of letters, and a great deal of iterative labeling was necessary to reach the results of the 2023 Grand Prize. We expect the quality of ink detection to greatly improve with iterative labeling, allowing us to read these scrolls to a great extent once digital unwrapping is further improved—work that’s happening in parallel.

Want to help label or unwrap? Check the get‑started guide and Discord.

What’s new in VC3D

Additional loss functions now steer iterative patch growth in VC3D (kudos especially to Hendrik Schilling and Sean Johnson).

Normal alignment loss: penalizes the deviation from normals using a inverse squared distance weight from sparse normals with the normals based on a fast approximate skeletonization trace of surface prediction slices parallel to the the three main coordinate planes.

Snapping loss: carefully snaps quads to the surface prediction by pulling them towards the prediction if a neighboring quad is already close. Based on the same skeletonization as the normals.

Alignment to directions from fibers and structure tensor: penalizes deviation from a dense direction field that can be derived from fibers predictions or structure tensor orientations.

Symmetric Dirichlet energy term: regularizes the quads by applying a strong barrier to proposed solutions with areas that are too small, while discouraging shear and aspect ratio drift in a symmetric way.

Plus, human‑in‑the‑loop corrections reduce sheet switches and help the mesh adhere more tightly to the papyrus surface.

We also added many more GUI features!

Interactively correct patch growth

Semi‑automatic segmentation‑line adjustment

Semi-automatic adherence to papyrus surface

New: one‑click flattening in VC3D (SLIM‑flatten)

VC3D segments were famous for their waviness, as you might have noticed in Fig. 2, 3 and 4. This effect now can be directly corrected in the GUI by clicking SLIM-flatten!

A thorough walkthrough is in the new YouTube tutorial and the updated README!

Data dump!

A few months ago, we promised to share data more frequently. As it turns out, more data requires more organization.

Johannes Rudolph is setting up a well-structured platform to make this possible.

We’ll be releasing datasets gradually—though hopefully more often—at:

You can already find data related to PHerc. 9B (Fig. 1), including:

volumes,

meshes,

surface volumes,

ink-detection predictions (for some of the surface volumes).

Changes to the prize lineup

We redesigned the Read a Full Scroll prize, which conflated ink detection and virtual unwrapping. Instead, we’re introducing the Unwrapping at Scale Prize: $200K to the first team or individual who unwraps ≥ 70% of two different scrolls.

For more information and details on the requirements, visit our official prizes page.

Progress prizes

Despite many interesting submissions this week, none were eligible for a progress prize.

Please remember, we award contributions that:

Are released or open-sourced early. Tools released earlier have a higher chance of being used for reading the scrolls than those released the last day of the month.

Actually get used. We’ll look for signals from the community: questions, comments, bug reports, feature requests. Our Annotation Team will publicly provide comments on tools they use.

Are well documented. It helps a lot if relevant documentation, walkthroughs, images, tutorials or similar are included with the work so that others can use it!

The October progress‑prize form is now open—submit here.

this is very, very much like seismic interpretation. the techniques are very much the same. PaleoScan (Eliis) and GeoTeric pops to my mind in terms of the work required. have you guys investigated it?

Does anyone know what "iteratively labeling" means?